In part 1, we looked at how humans perceive lighting and reflections – essential background for judging how realistic these cues need to be. The most important goal is for the scene to look natural to human viewers. Therefore, the virtual lighting needs to closely match the real lighting.

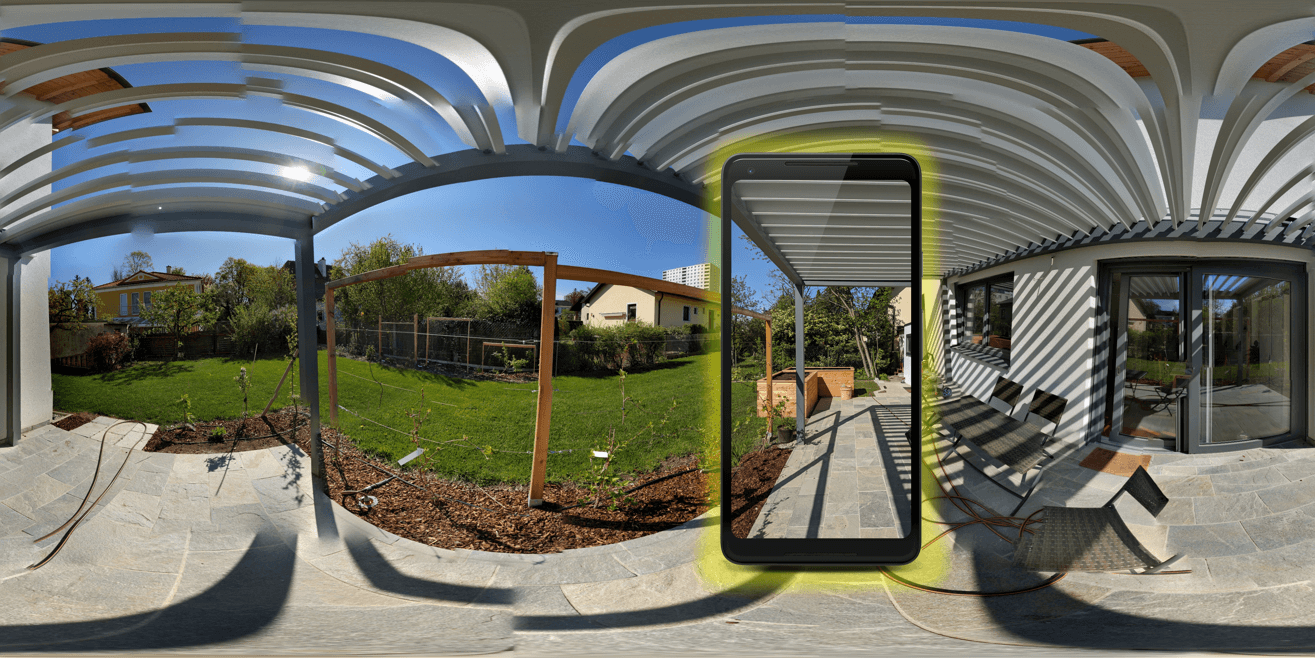

But how do you measure lighting in the real world, and how do you apply it to virtual objects?

Virtual Lighting

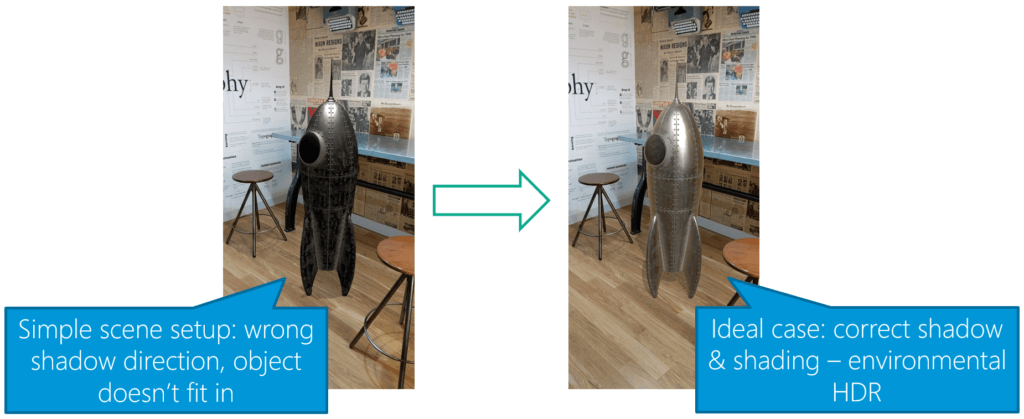

How should you set up virtual lighting to satisfy the criteria from part 1? Humans quickly notice when an object doesn't fit into a scene.

The image above from the Google Developer Documentation shows both extremes. Even though you might still recognize that the rocket is a virtual object in the right image, you’ll need to look a lot harder. The image on the left is clearly wrong, especially due to the misplaced shadow.
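A misplaced shadow stands out because, for a distant light source such as the sun, shadow placement follows simple geometry: each point of an object casts its shadow along the light direction onto the ground. The following is a minimal sketch in Python, assuming a single directional light, a flat horizontal ground plane at height `ground_y`, and a y-up coordinate system. `Vec3` and `project_shadow_point` are hypothetical names for illustration, not part of any AR SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    """Hypothetical minimal 3D vector type (y axis points up)."""
    x: float
    y: float
    z: float


def project_shadow_point(point: Vec3, light_dir: Vec3, ground_y: float = 0.0) -> Vec3:
    """Project `point` onto the horizontal plane y == ground_y along a directional light.

    Assumption: `light_dir` is the direction the light travels (from the light
    toward the scene), so it must point downward to cast a shadow on the ground.
    """
    if light_dir.y >= 0:
        raise ValueError("light_dir.y must be negative to cast a shadow on the ground")
    # Solve point.y + t * light_dir.y == ground_y for the ray parameter t.
    t = (ground_y - point.y) / light_dir.y
    # Follow the light ray by t to find where it hits the ground plane.
    return Vec3(point.x + t * light_dir.x, ground_y, point.z + t * light_dir.z)


# The tip of a virtual object 2 units above the ground, lit by a light
# traveling toward +x and downward at 45 degrees.
tip_shadow = project_shadow_point(Vec3(0.0, 2.0, 0.0), Vec3(1.0, -1.0, 0.0))
print(tip_shadow)
```

If the virtual light direction does not match the real one, for example with its x component mirrored, the computed shadow lands on the opposite side of the object from all the real shadows in the scene, a mismatch viewers notice quickly.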
