Overall, the AR ecosystem is still small, yet it’s already fragmented: Google develops ARCore, Apple develops ARKit, and Microsoft is working on the Mixed Reality Toolkit. Fortunately, Unity has started unifying these APIs with the ARInterface.

At Unite Austin, two Unity engineers introduced the new experimental ARInterface. In November 2017, they released it to the public via GitHub. It looks like it will be integrated into Unity 2018: the new features listed for Unity 2018.1 include “AR Crossplatform Kit (ARCore/ARKit API)”.

Remote Testing of AR Apps

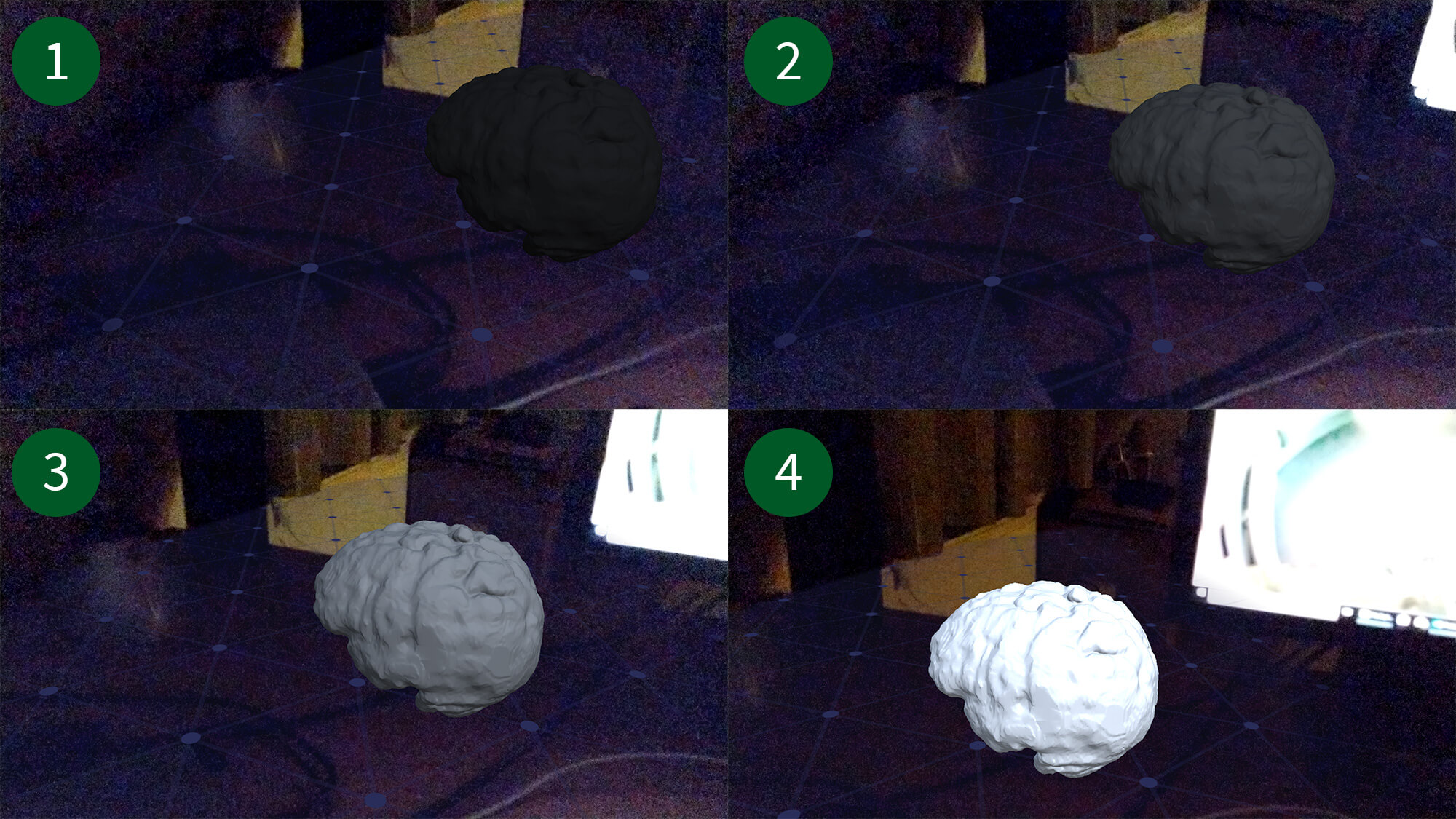

The traditional mobile AR app development cycle involves compiling the app and deploying it to a real device. That takes a long time and makes quick test iterations tedious.

A big advantage of ARKit so far has been the ARKit Unity Remote feature. The iPhone runs a simple “tracking” app that transmits its captured live data to the PC. Your actual AR app runs directly in the Unity Editor on the PC, using the data it receives from the device. With this approach, you can run the app by simply pressing the Play button in Unity, without native compilation.

This is similar to the Holographic Emulation for the Microsoft HoloLens, which has been available for Unity for some time.

The great news is that the new Unity ARInterface finally adds a similar feature to Google ARCore: ARRemoteInterface. It’s available cross-platform for ARKit and ARCore.

ARInterface Demo App

In this article, I’ll explain the steps to get AR Remote running on Google ARCore. For reference: “Pirates Just AR” also posted a helpful short video on YouTube.
