Updated: December 12th, 2022 – changed info from Microsoft LUIS to Microsoft Azure Cognitive Services / Conversational Language Understanding.

During the last few years, cognitive services have become immensely powerful. Natural language understanding is especially interesting: with the latest tools, training a computer to understand spoken sentences and extract information takes only a matter of minutes. We as humans no longer need to learn how to speak with a computer; it simply understands us.

I’ll show you how to use the Conversational Language Understanding Cognitive Service from Microsoft. The aim is to build an automated checklist for nurses working at hospitals. Every morning, they check the vital signs of every patient and document the measurements on paper checklists.

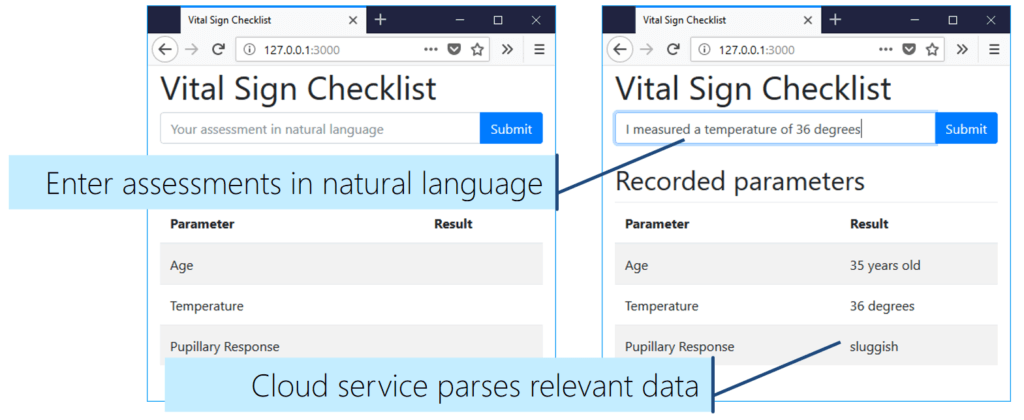

With the new app developed in this article, the process is much easier. While checking the vital signs, nurses usually talk to the patients about their assessments. The “Vital Signs Checklist” app filters out the relevant data (e.g., the temperature or the pupillary response) and marks it in a checklist. Nurses no longer have to pick up a pen to manually record the information.

The Result: Vital Signs Checklist

In this article, we’ll create a simple app that uses the conversational language understanding APIs of the Microsoft Azure Cognitive Services. The service extracts the relevant data from freely written or spoken assessments.

How is the app going to work?

For example, the nurse would say:

I just measured a temperature of 36 degrees.

The Conversational Language Understanding service analyzes this assessment text. Then, the app marks “36 degrees” in the “temperature” field of the checklist. What’s so amazing is that you can speak in natural language: the system goes beyond recognizing predefined text templates. Thus, it’ll also recognize:

The thermometer shows 36 degrees.

To achieve this, we’re creating a Node.js server that handles the interaction with the Conversational Language Understanding service. The user interface is a website styled with Bootstrap. It features an input area for the assessment, as well as a table that shows the results.

For simplicity, I didn’t integrate voice recognition – instead, you enter the assessment as text. However, adding live voice input would only be another cloud service to integrate into your app architecture. It’s a pre-processing step to transform the recorded voice to text.

The textual assessment is then sent to the language understanding service by the Node.js backend. In this article, I’ll show how to configure the service. It will recognize three distinct types of inputs:

- The patient’s age (-> prebuilt component: age)

- The measured temperature (-> prebuilt component: temperature)

- The pupillary reaction (-> list component)
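The list component works differently from the prebuilt ones: you define a small set of normalized values, each with its synonyms, and the service maps any synonym it finds to its value. The JavaScript sketch below imitates that idea locally, purely for illustration. The values and synonyms (`reactive`, `sluggish`, `nonreactive`, ...) and the function name `matchListComponent` are my own assumptions, not the configuration of this project; in the real app, the cloud service does the matching.

```javascript
// Hypothetical list values for a "pupillary reaction" list component:
// each key is the normalized value, each array holds its synonyms.
const pupillaryReactionList = {
  reactive: ["reactive", "brisk", "normal", "responsive"],
  sluggish: ["sluggish", "slow", "delayed"],
  nonreactive: ["nonreactive", "non-reactive", "fixed", "unresponsive"],
};

// Escape characters that have a special meaning in regular expressions.
const escapeRegExp = (s) => s.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");

// Local illustration of list matching: return the normalized key of the
// first synonym found as a whole word in the text, or null if none matches.
// The real matching is done by the cloud service, not by this function.
function matchListComponent(text, list) {
  const lower = text.toLowerCase();
  for (const [key, synonyms] of Object.entries(list)) {
    for (const synonym of synonyms) {
      // Letters and hyphens count as part of a word, so "reactive"
      // does not match inside "non-reactive" or "nonreactive".
      const pattern = new RegExp(`(^|[^a-z-])${escapeRegExp(synonym)}([^a-z-]|$)`);
      if (pattern.test(lower)) return key;
    }
  }
  return null;
}

console.log(matchListComponent("Both pupils are sluggish.", pupillaryReactionList)); // sluggish
```

The benefit of the list approach is that the checklist always receives one of a few known values, no matter which synonym the nurse used.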

Based on the natural text input, the Conversational Language Understanding service determines the top-scoring intent (e.g., “Temperature”) and extracts the corresponding entity (e.g., “number”).

App Architecture

Between entering an assessment in the browser-based interface and receiving the extracted data, the app passes several messages between its individual parts:

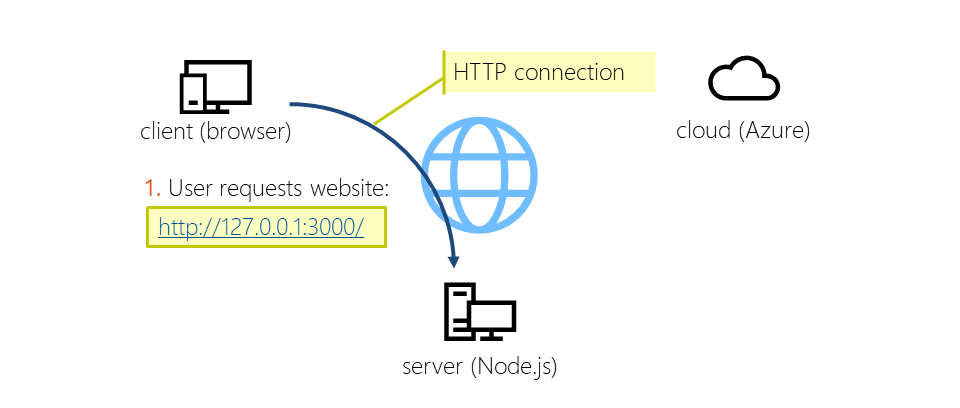

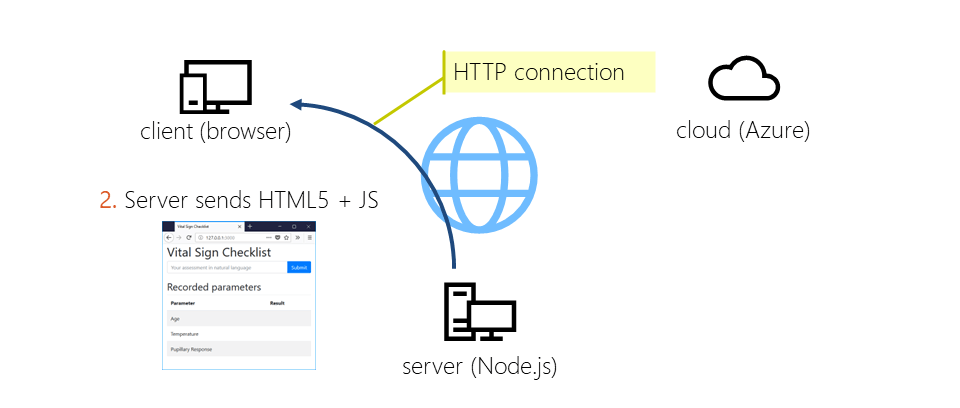

Loading the Website (1 + 2)

After starting the Node.js server, the user connects to it through a web browser. During development, you’ll run everything locally, so you request a web page at 127.0.0.1 (the loopback address of your own machine). The browser and the server communicate over HTTP.

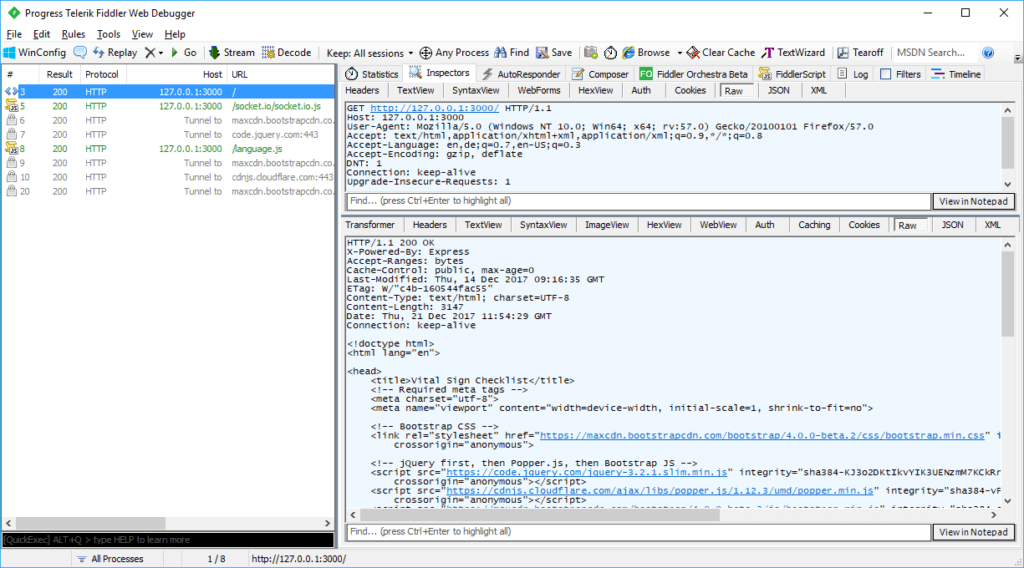

The following capture from Telerik’s Fiddler shows the traffic generated by the browser. First, the browser requests the main HTML web page. Afterwards, it loads dependencies, including our own “language.js” that contains the logic of the front-end. Additionally, it loads the pre-built libraries we use, including socket.io, Bootstrap and jQuery.

This image visualizes the HTTP response:

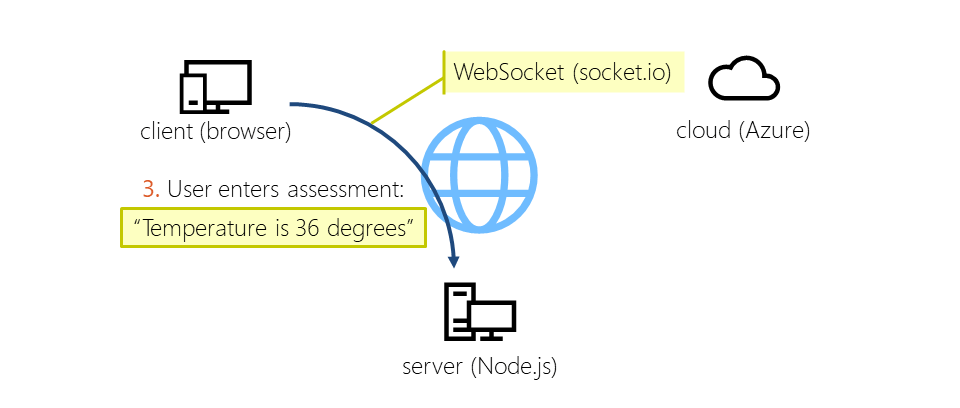

Sending a Message from the Client to the Server (3)

Now that the UI is visible in the browser, the user enters the assessment in the form and clicks on the submit button. This sends a message to the Node.js server using a WebSocket. To make message handling easier for us, we’re using the socket.io library.

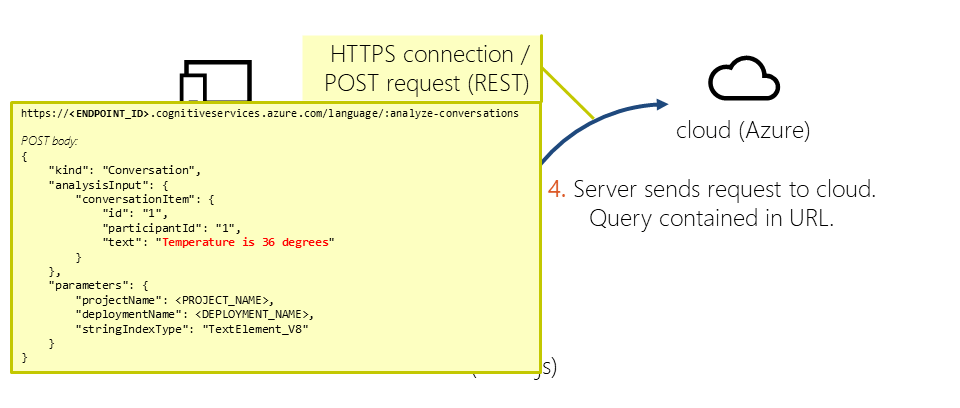
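On the server, receiving the message comes down to one handler per assessment. The sketch below keeps that handler independent of socket.io by injecting two functions: `analyze` (a wrapper around the cloud call) and `emit` (a wrapper around `socket.emit`). The handler name, the `assessment` event name in the wiring comment, and the `analysis-error` event are hypothetical choices for illustration.

```javascript
// Handle one assessment sent from the browser (step 3) and send the
// result back (step 6). Both dependencies are injected:
//   analyze(text) -> Promise<{ intent, entity }>  wraps the cloud call
//   emit(eventName, payload)                       wraps socket.emit
async function handleAssessment(text, analyze, emit) {
  const assessment = typeof text === "string" ? text.trim() : "";
  if (assessment === "") {
    // "analysis-error" is a hypothetical event name.
    emit("analysis-error", "Please enter an assessment.");
    return;
  }
  try {
    const { intent, entity } = await analyze(assessment);
    // The intent name becomes the message name, the measurement its content.
    emit(intent, entity);
  } catch (err) {
    console.error("Language service call failed:", err.message);
    emit("analysis-error", "The assessment could not be analyzed.");
  }
}

// Wiring with socket.io (needs the socket.io package, not executed here;
// "assessment" is a hypothetical event name, callLanguageService your own wrapper):
// io.on("connection", (socket) => {
//   socket.on("assessment", (text) =>
//     handleAssessment(text, callLanguageService, (name, data) => socket.emit(name, data)));
// });
```

Injecting `analyze` and `emit` makes the handler easy to test without a browser or a cloud subscription.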

Communicating with the Cloud Service (4)

The server picks up the message and calls the Conversational Language Understanding service in the Azure cloud. Under the hood, this is a standard POST request over an encrypted HTTPS connection. The request contains all the necessary parameters: the project and deployment names, the subscription key, as well as the assessment entered by the user.

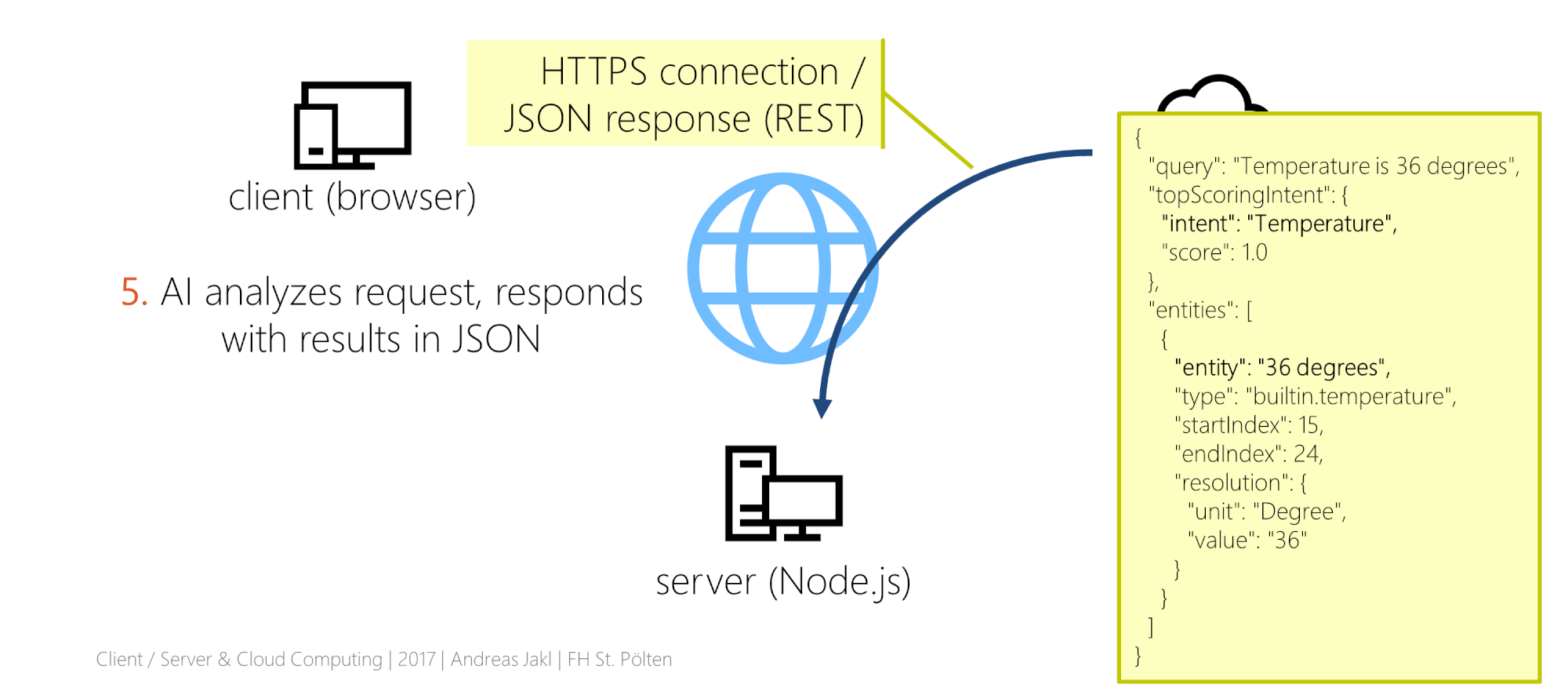

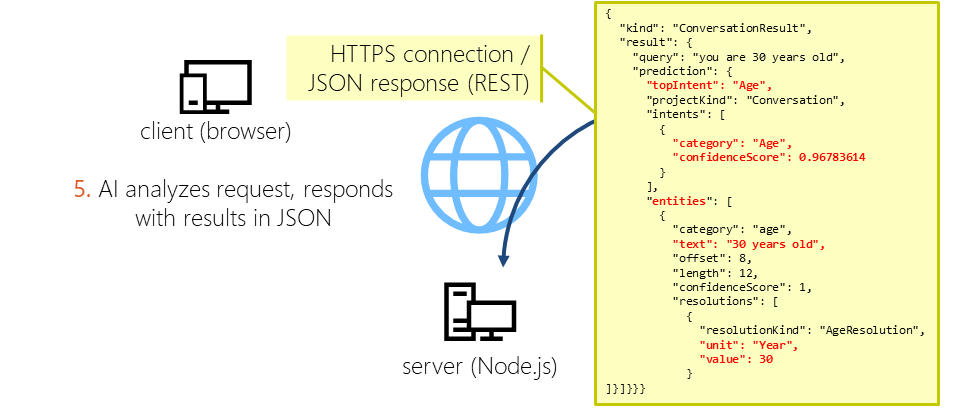
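The request in step 4 can be assembled as shown below. This is a sketch based on my understanding of the Conversational Language Understanding runtime API (`POST {endpoint}/language/:analyze-conversations`, with the key in the `Ocp-Apim-Subscription-Key` header). The `api-version` value `2022-05-01`, the placeholder endpoint, and the function names `buildCluRequest` and `analyzeConversation` are assumptions, so compare them with the current Azure documentation for your resource. Sending relies on the global `fetch` available in Node.js 18 and later.

```javascript
// Build the POST request for the CLU runtime API.
// Assumption: api-version 2022-05-01; endpoint/project/deployment are placeholders.
function buildCluRequest({ endpoint, key, projectName, deploymentName }, text) {
  const url = `${endpoint.replace(/\/+$/, "")}/language/:analyze-conversations?api-version=2022-05-01`;
  return {
    url,
    options: {
      method: "POST",
      headers: {
        "Ocp-Apim-Subscription-Key": key, // secret: keep it on the server
        "Content-Type": "application/json",
      },
      body: JSON.stringify({
        kind: "Conversation",
        analysisInput: {
          conversationItem: { id: "1", participantId: "1", text },
        },
        parameters: { projectName, deploymentName, stringIndexType: "TextElement_V8" },
      }),
    },
  };
}

// Send the request with the fetch API built into Node.js 18+.
async function analyzeConversation(config, text) {
  const { url, options } = buildCluRequest(config, text);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`Language service returned HTTP ${response.status}`);
  }
  return response.json();
}
```

Separating request building from sending lets you inspect the exact request (as in the captures above) without calling the paid service.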

Receiving the Results of the Language Understanding Service (5)

The artificial intelligence service immediately analyzes the text. The result is a JSON document sent back to our server as the response to the POST request.

From the complete analysis included in the JSON, we’re only interested in two items:

- The name of the top intent. That’s what the AI classified as the most likely result, based on our training.

- The entity, which contains the measurement extracted from the sentence.

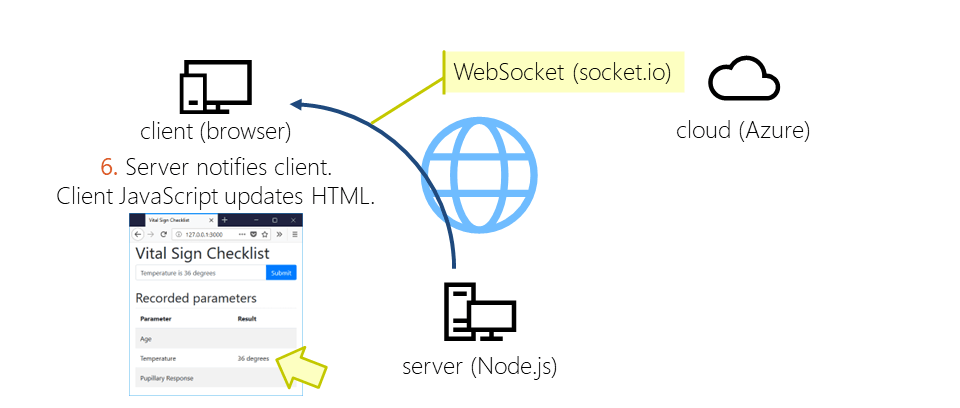
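Picking these two items out of the response could look like the following sketch. It assumes the JSON shape `result.prediction.topIntent` and `result.prediction.entities` (each entity with `category`, `text`, and `confidenceScore`), as I understand the 2022-05-01 API version. Choosing the entity with the highest confidence score is my own simplification for the case where several entities are found; the function name is hypothetical.

```javascript
// Pick the top intent and the most confident entity from a CLU response.
// Assumed shape: { result: { prediction: { topIntent, entities: [...] } } }.
function extractTopIntentAndEntity(cluResponse) {
  const prediction = cluResponse?.result?.prediction;
  if (!prediction) {
    throw new Error("Unexpected response: no result.prediction");
  }
  const entities = Array.isArray(prediction.entities) ? prediction.entities : [];
  // Simplification: if several entities were found, keep the most confident one.
  const best = entities.reduce(
    (a, b) => ((b.confidenceScore ?? 0) > (a.confidenceScore ?? 0) ? b : a),
    entities[0]
  );
  return { intent: prediction.topIntent, entity: best ? best.text : null };
}
```

The resulting `{ intent, entity }` pair is what the next step needs: the intent as the message name, the entity text as its content.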

Updating the User Interface (6)

Finally, the server sends a message to the client containing the results. For the connection, it again uses a WebSocket. The message title is the name of the identified intent, the message content is the extracted measurement. The JavaScript code running on the client then updates the HTML user interface using jQuery.

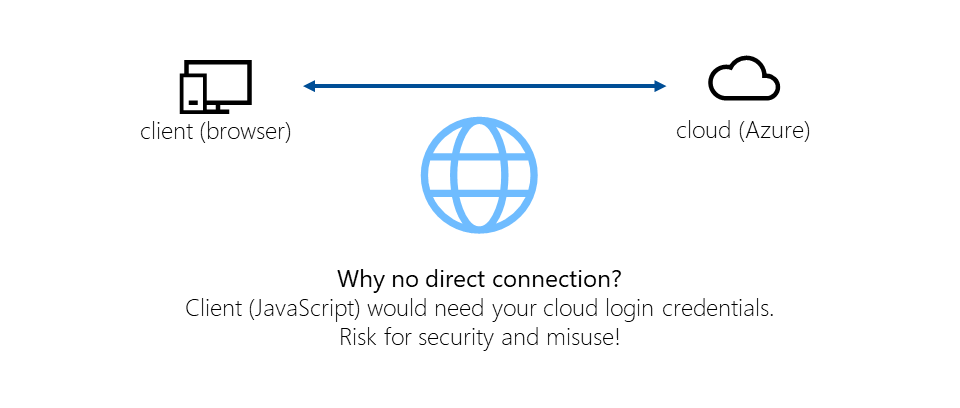
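On the client, each intent name can be mapped to the table cell it fills. The ids in `CHECKLIST_CELLS`, the intent names, and `cellForIntent` are hypothetical; they have to match your trained intents and your HTML markup. The jQuery and socket.io client calls appear only in a comment because they need a browser.

```javascript
// Hypothetical mapping from intent names to the ids of checklist table cells.
const CHECKLIST_CELLS = {
  Age: "age-value",
  Temperature: "temperature-value",
  PupillaryReaction: "pupils-value",
};

// Return the id of the cell to update, or null for intents that are not
// part of the checklist (for example "None").
function cellForIntent(intent) {
  return Object.prototype.hasOwnProperty.call(CHECKLIST_CELLS, intent)
    ? CHECKLIST_CELLS[intent]
    : null;
}

// In the browser (needs jQuery and the socket.io client, not executed here):
// const socket = io();
// Object.keys(CHECKLIST_CELLS).forEach((intent) => {
//   socket.on(intent, (value) => $("#" + cellForIntent(intent)).text(value));
// });
```

Using `hasOwnProperty` avoids accidentally matching inherited names such as `toString` if the service ever returned an unexpected intent.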

Why No Direct Connection between Client and Cloud?

The main question you might have: why do we need the intermediate Node.js server at all? Why not let the client communicate directly with the cloud service? It’d make the architecture a lot simpler.

There are several reasons. The most important is security. For a commercial version of this app, you’d use the paid tier of the language understanding service. For obvious reasons, it’s a bad idea to send the secret subscription key to the user’s browser.


Additionally, it’s easier for you to update one central service in case you need to improve the code, instead of updating an app installed on all the clients.
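To make the security argument concrete: the subscription key stays in the server’s environment and never appears in code delivered to the browser. A minimal sketch, assuming hypothetical environment variable names (`LANGUAGE_ENDPOINT`, `LANGUAGE_KEY`, `LANGUAGE_PROJECT`, `LANGUAGE_DEPLOYMENT`) and a hypothetical function name:

```javascript
// Read the language service settings on the server only.
// The environment variable names are hypothetical.
function loadLanguageServiceConfig(env = process.env) {
  const required = ["LANGUAGE_ENDPOINT", "LANGUAGE_KEY", "LANGUAGE_PROJECT", "LANGUAGE_DEPLOYMENT"];
  const missing = required.filter((name) => !env[name]);
  if (missing.length > 0) {
    // Fail at startup instead of on the first user request.
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return {
    endpoint: env.LANGUAGE_ENDPOINT,
    key: env.LANGUAGE_KEY, // never include this object in a message to the client
    projectName: env.LANGUAGE_PROJECT,
    deploymentName: env.LANGUAGE_DEPLOYMENT,
  };
}
```

Rotating the key then only means changing the server’s environment, which also fits the second argument: one central place to update.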

Next steps

In the next part, we’ll create the Node.js backend that handles all the communication between the client and the conversational language understanding service in the cloud.