In this last part of the HoloLens Spectator View series, I’ll add the final tweak to my sample project and show you what kind of photos the Compositor saves on your PC. Getting to this point has taken a lot more work than expected (and a lot more blog posts, too!), but the journey was worth it: you can finally get great photos and videos of the mixed reality experience on HoloLens.

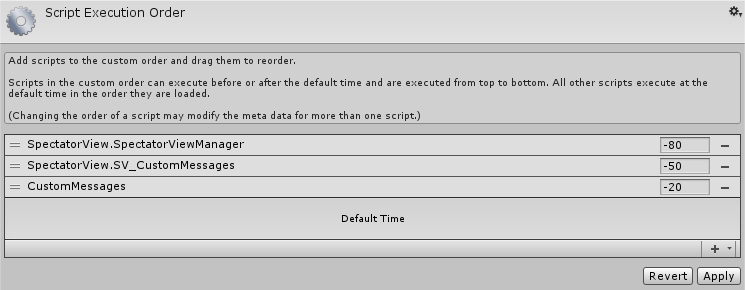

Script Execution Order

Sending the updates didn’t work right away, so I looked for other things the sample does differently from my app that might be responsible. One such difference was the Script Execution Order.

By default, Unity doesn’t guarantee the order in which scripts are initialized. While debugging, I found that an instance of a class wasn’t available yet when another script tried to access it.

It turns out that the Sharing example defines a custom script execution order that ensures the networking code is executed first.

I don’t consider that good coding practice. Setting a script to load a few milliseconds before another one is a quick fix for a small issue, but it doesn’t scale well in a larger project.

But to make Spectator View work, let’s remove any potential issues and follow the sample. Go to Edit > Project Settings > Script Execution Order and define the following values:

Running HoloLens Spectator View: Mixed Reality

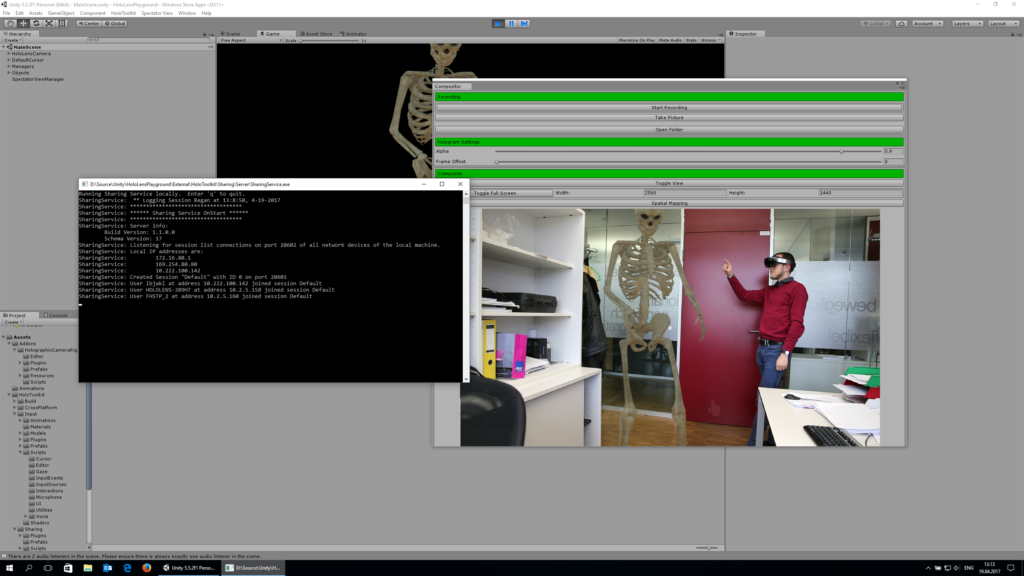

That was it – Spectator View is now working! The quality of the live stream and photos you get is of course *much* higher than that of screenshots captured on the HoloLens itself. Additionally, because you see both the Mixed Reality content and the user, it’s much easier to understand what’s going on compared to a simple first-person screenshot.

The following image shows what it looks like on the PC when Spectator View is running. The command line window is the sharing server, which shows that 3 users are part of the session: the PC with Unity, the HoloLens mounted on the DSLR, as well as the HoloLens that I’m wearing in the photo.

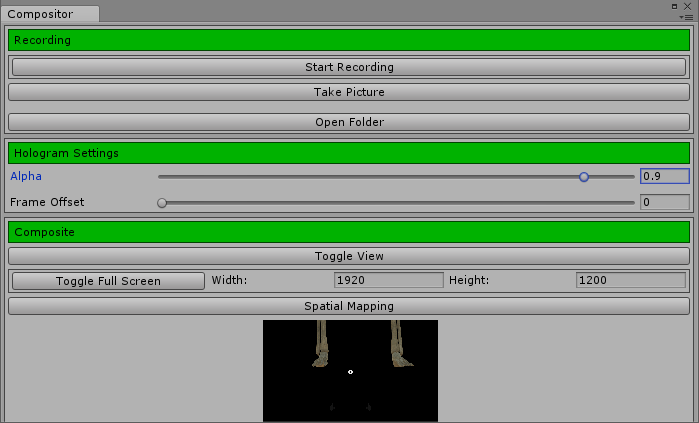

In the Compositor window, you can change an important setting: the Alpha value. By default, it’s set to 0.9, which lets the background shine through the rendered contents a little bit. That’s closer to the real HoloLens view, where the hologram doesn’t completely cover reality. How opaque the holograms appear on the real HoloLens depends mostly on the lighting situation, but 0.9 works nicely for photos.

Using the buttons at the top of the compositor window, you can start recording a video or take a picture.

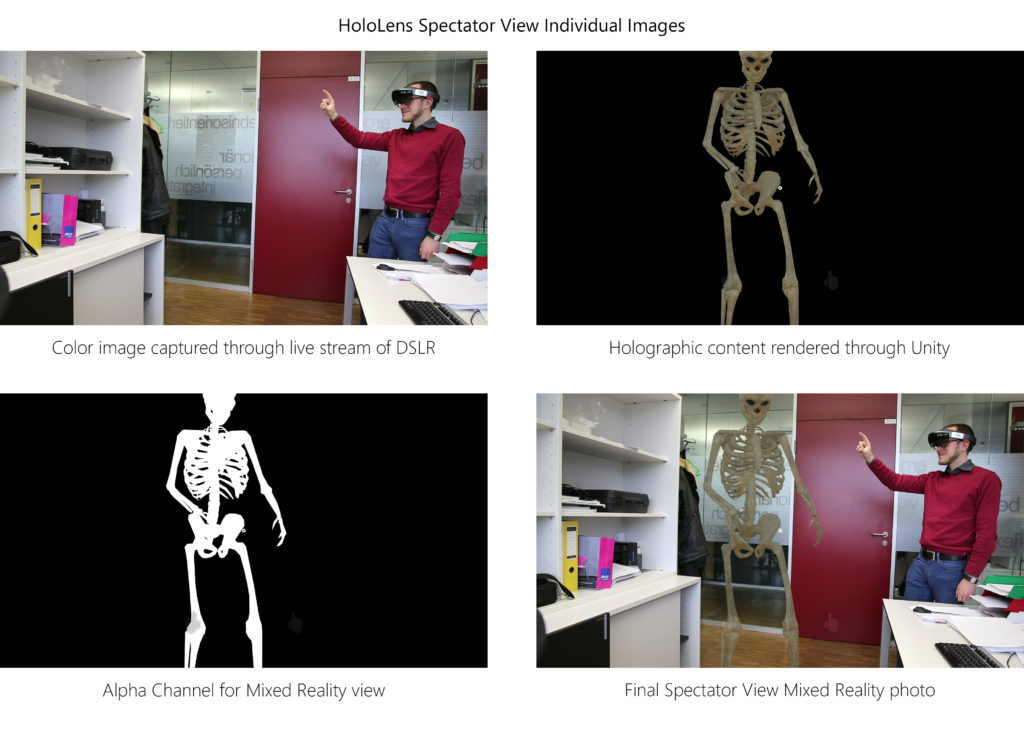

Capturing a picture actually saves four distinct image files:

- First of all, the Spectator View tool saves a color image of the live stream of the DSLR (in Full HD resolution).

- Secondly, it saves the 3D-only rendering made by Unity, in the same resolution and with a perspective matching that of the DSLR (this is why we did the calibration earlier).

- Thirdly, the alpha channel image contains the area of the hologram.

- Finally, the compositor also saves the merged mixed reality photo, combining all three images into one.
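To make the merge step a bit more concrete, here is a minimal Python sketch of how the three images and the Alpha setting could be combined. Note that this is an assumption for illustration only: the blend formula, the function names `blend_pixel` and `composite`, and the tiny 2-pixel test images are all hypothetical and not taken from the Spectator View Compositor, which may compute its output differently.

```python
# Hypothetical sketch of the merge step. The blend formula below is an
# assumption for illustration; it is NOT taken from the Compositor's source.

def blend_pixel(camera, hologram, coverage, alpha=0.9):
    """Blend one RGB pixel (0-255 per channel).

    camera:   pixel from the DSLR color image
    hologram: pixel from the 3D-only Unity rendering
    coverage: 0.0..1.0 value from the alpha channel image
              (1.0 = the hologram fully covers this pixel)
    alpha:    global opacity, like the Alpha value in the Compositor window
    """
    weight = coverage * alpha  # how much of the hologram ends up visible
    return tuple(
        round(weight * h + (1.0 - weight) * c)
        for c, h in zip(camera, hologram)
    )


def composite(camera_img, hologram_img, coverage_img, alpha=0.9):
    """Blend whole images given as rows of pixels (same size for all three)."""
    return [
        [blend_pixel(c, h, m, alpha) for c, h, m in zip(cam_row, holo_row, mask_row)]
        for cam_row, holo_row, mask_row in zip(camera_img, hologram_img, coverage_img)
    ]


# Tiny 1x2 example: a gray camera image, with a red hologram on the right pixel only.
camera = [[(100, 100, 100), (100, 100, 100)]]
hologram = [[(0, 0, 0), (200, 0, 0)]]
coverage = [[0.0, 1.0]]

merged = composite(camera, hologram, coverage)
print(merged)  # [[(100, 100, 100), (190, 10, 10)]]
```

With an Alpha of 0.9, the pixel covered by the hologram still contains 10% of the camera image, which is the "background shining through" effect. Under this model, setting `alpha=1.0` would make the hologram fully opaque.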

What’s Next?

Right now, the setup captures a Full HD live stream from the DSLR camera. Of course, the quality is already much better than the built-in camera of the HoloLens. However, you can improve the quality and resolution further by activating the Canon SDK and taking a full-res photo.

To properly save & remember the hologram position in the real world, you should re-activate the world anchors for the objects using the synchronous method I mentioned in the previous blog post.

Unfortunately, the Spectator View tools are far from stable and it’s a bit tricky to get the system to work. I’ll soon post a follow-up troubleshooting blog post with some workarounds that I’ve found. This includes how to improve synchronization in case there is a considerable offset in the object placement.

But in general, Spectator View works and it’s amazing to be able to capture videos and high-quality photos of what the mixed reality experience feels like for the HoloLens user!

HoloLens Spectator View blog post series

This post is part of a short series that guides you through integrating HoloLens Spectator View into your own Mixed Reality app: