Updated: January 18th, 2023 – changed info from Microsoft LUIS to Microsoft Azure Cognitive Services / Conversational Language Understanding.

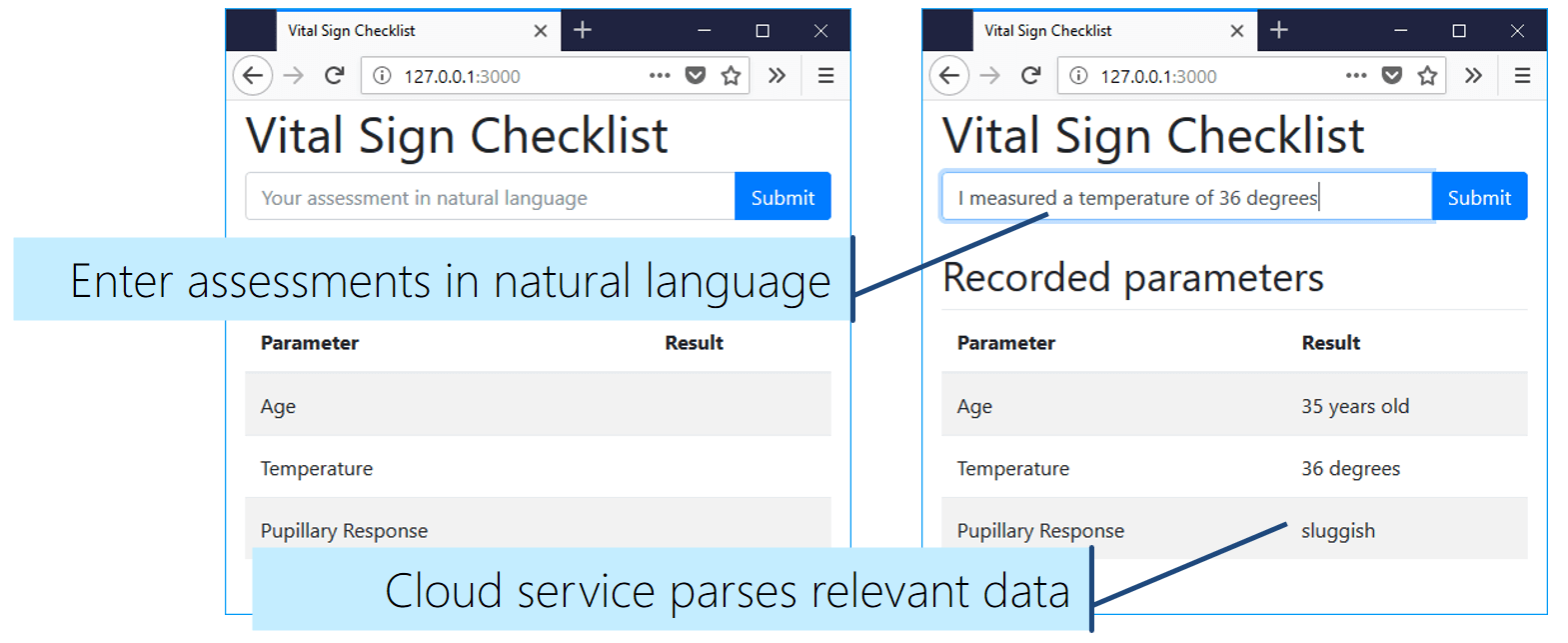

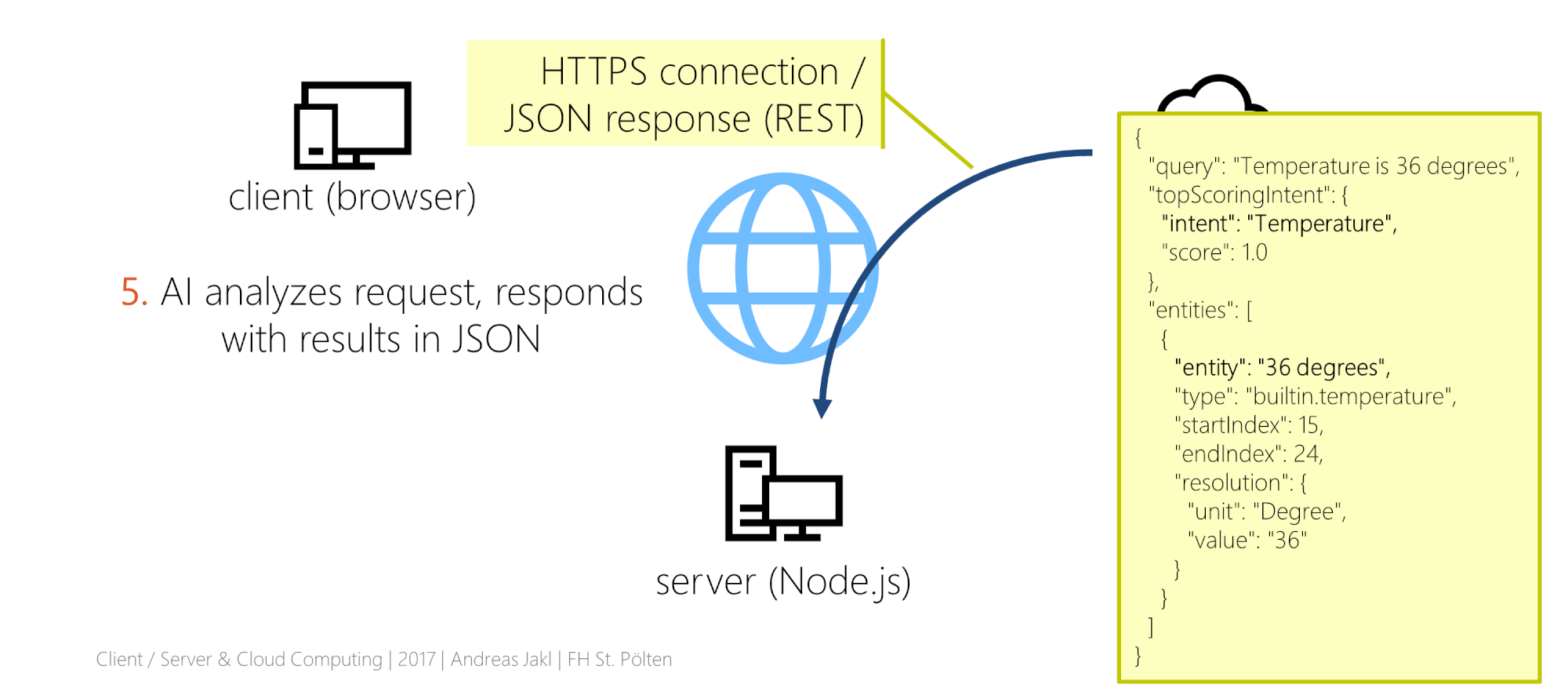

In this last part, we bring the vital signs checklist to life. Artificial Intelligence interprets assessments spoken in natural language, extracts the relevant information and keeps a browser-based checklist up to date. Real-time communication is handled through WebSockets with Socket.IO.

The example scenario focuses on a vital signs checklist in a hospital. The same concept applies to countless other use cases.

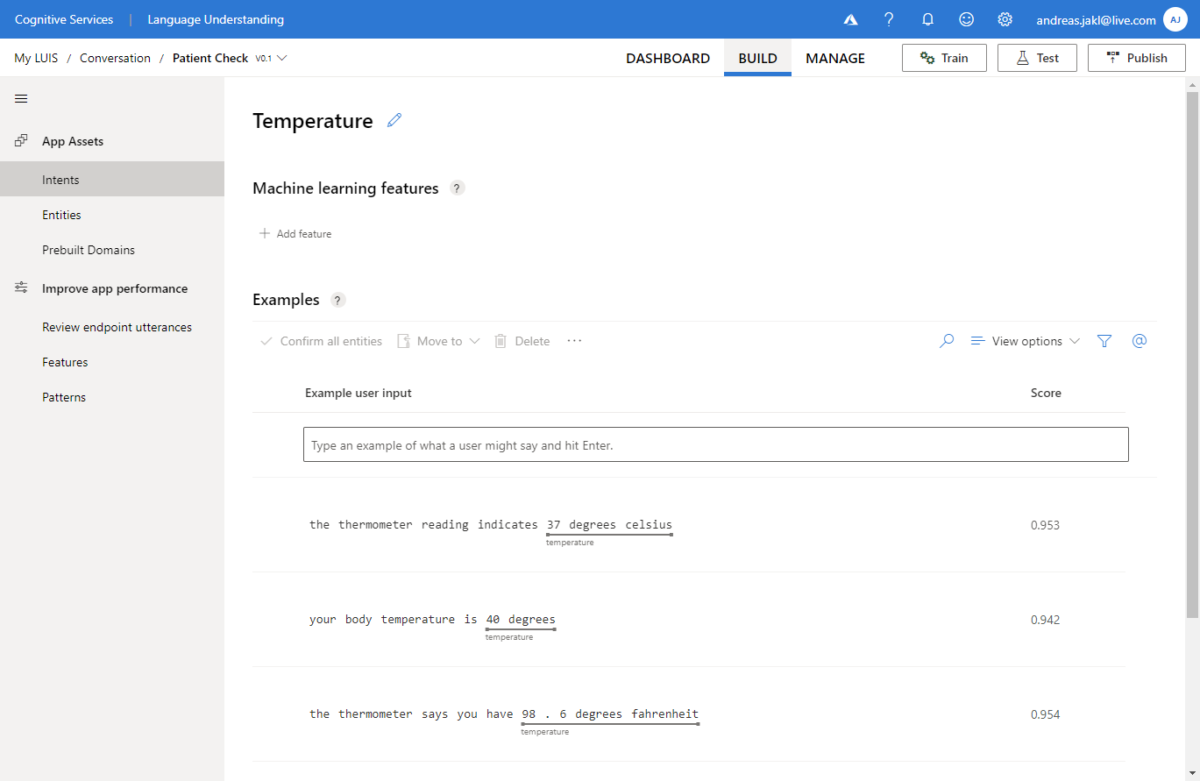

In this article, we’ll query the Microsoft Azure Conversational Language Understanding Service from a Node.js backend. The results are communicated to the client through Socket.IO.

Connecting Language Understanding to Node.js

In the previous article, we verified that our language understanding service works fine. Now, it’s time to connect all components. The aim is to query our model endpoint from our Node.js backend.
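
To make the goal concrete, here is a minimal sketch of what such a query could look like. It is not the final code of this series. It assumes Node.js 18 or later (for the built-in `fetch`), the REST route `/language/:analyze-conversations`, and API version `2023-04-01`, which you may need to change to match your resource. The function names (`buildCluRequest`, `summarizePrediction`, `analyzeUtterance`) and the shape of the `config` object are made up for illustration; the endpoint, key, project name and deployment name are placeholders for your own values.

```javascript
// Sketch only: all names below are illustrative, not part of any SDK.
// Assumption: adjust the API version to one your Azure resource supports.
const API_VERSION = "2023-04-01";

/** Build the URL and fetch options for one "analyze-conversations" call. */
function buildCluRequest({ endpoint, apiKey, projectName, deploymentName }, text) {
  const base = endpoint.replace(/\/+$/, ""); // tolerate a trailing slash
  return {
    url: `${base}/language/:analyze-conversations?api-version=${API_VERSION}`,
    options: {
      method: "POST",
      headers: {
        "Ocp-Apim-Subscription-Key": apiKey,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({
        kind: "Conversation",
        analysisInput: {
          conversationItem: { id: "1", participantId: "1", text },
        },
        parameters: {
          projectName,
          deploymentName,
          stringIndexType: "TextElement_V8",
        },
      }),
    },
  };
}

/** Reduce the service response to the parts a checklist would need. */
function summarizePrediction(response) {
  const prediction = response?.result?.prediction ?? {};
  return {
    topIntent: prediction.topIntent ?? null,
    entities: (prediction.entities ?? []).map((e) => ({
      category: e.category,
      text: e.text,
    })),
  };
}

/** Send one utterance to the service and return the summarized prediction. */
async function analyzeUtterance(config, text) {
  const { url, options } = buildCluRequest(config, text);
  const res = await fetch(url, options);
  if (!res.ok) {
    throw new Error(`Language service returned HTTP ${res.status}`);
  }
  return summarizePrediction(await res.json());
}
```

Keeping the request builder and the response parser separate from the network call makes both easy to check without a live Azure resource. The object returned by `analyzeUtterance` is small enough to be forwarded to connected browsers as-is, for example with Socket.IO's `io.emit` and an event name of your choosing.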
