In an Augmented Reality scene, users look at the live camera feed. Virtual objects are anchored at specific positions in the real world. Our task is to let the user place virtual objects in the real world. To achieve that, the user simply taps on the smartphone screen. Through a hit test, our script then creates an anchor in the real world and links it to a virtual 3D model entity.

That’s the high level overview. To code this anchoring logic, a few intermediate steps are needed:

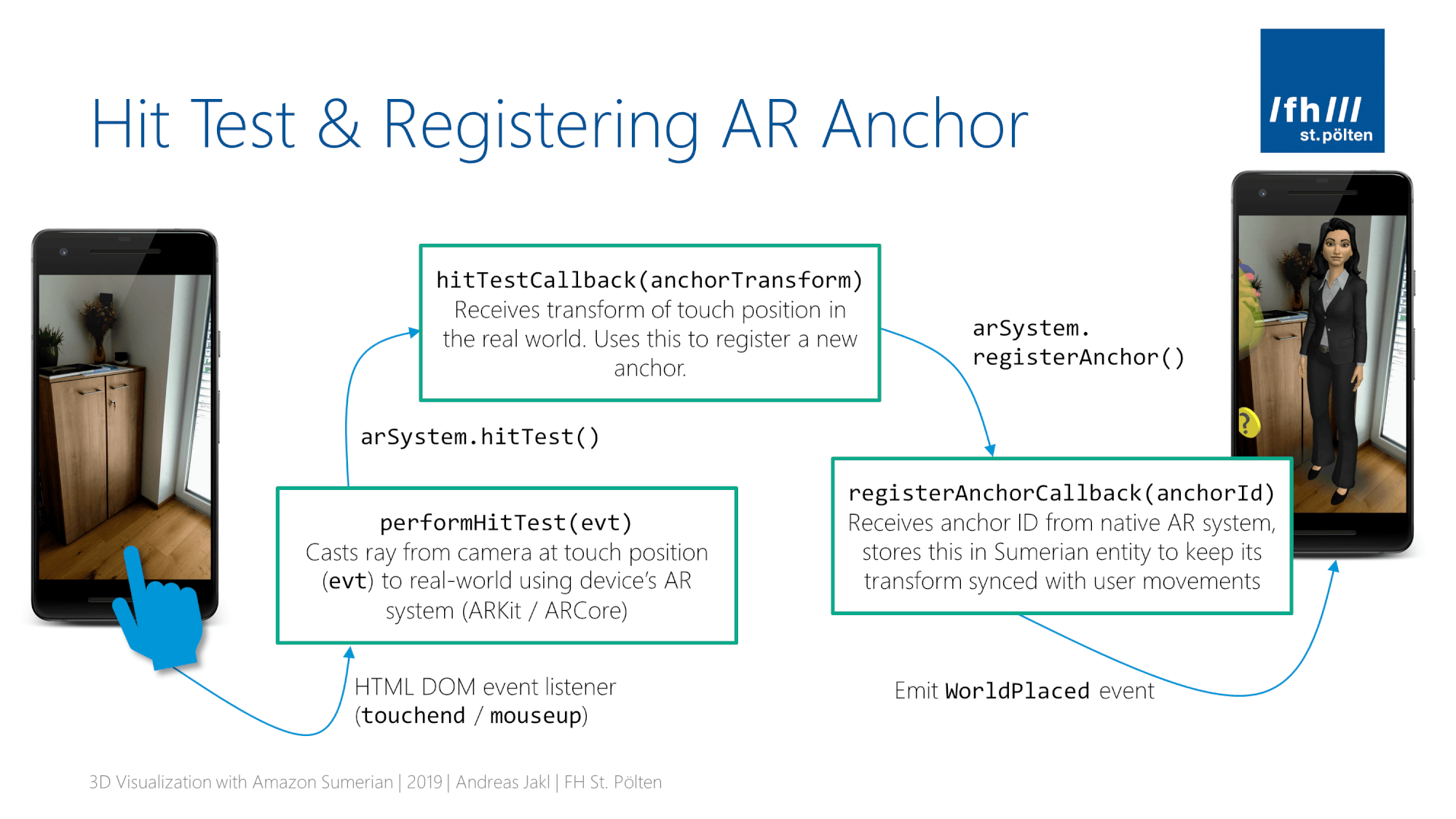

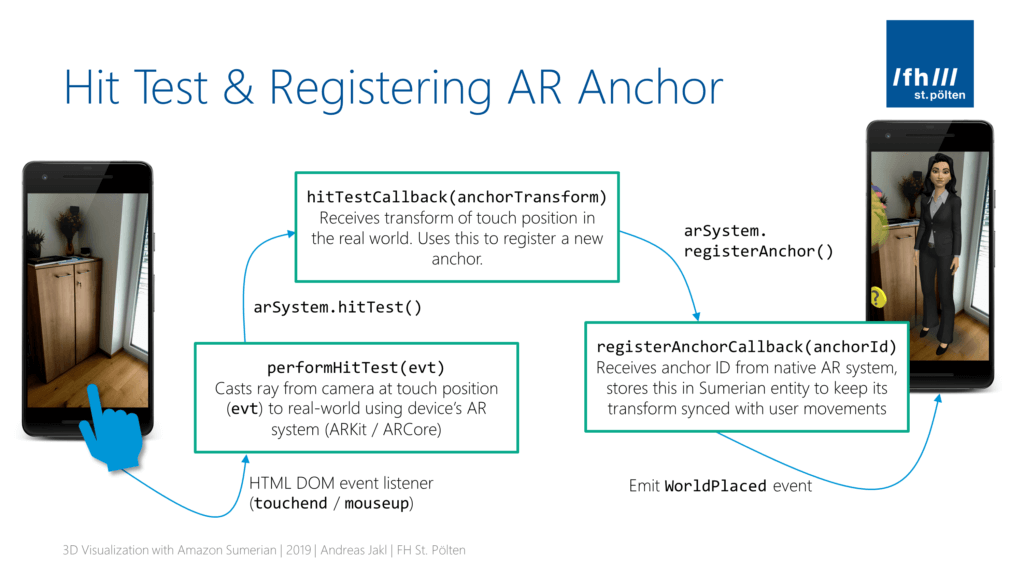

- Hit Test: converts the coordinates of the user’s screen tap into normalized coordinates and sends them to the AR system. The AR system then checks what’s in the real world at that position.

- Register Anchor: next, our script instructs the AR system to create an anchor at that position.

- Link Anchor: finally, the ID of the created anchor is linked to our entity. This allows Sumerian to continually update the transform of our 3D entity. Thus, the object stays in place in the real world, even when the user moves around.

Translating these steps into code yields an architecture with three call-backs, starting with the touch event and ending with the registered and linked AR anchor.

Hit Test in Augmented Reality

If our scene is in place mode and the user taps the screen, we want the 3D model to be anchored at that real-world position. Let’s start coding the first call-back. It is invoked whenever the user taps on the screen.

There’s some magic involved: from the HTML DOM, we just get the 2D touch coordinates. Our script then converts them, taking into account the device pixel ratio as well as the viewport width and height.

In the end, we get normalized image coordinates in the range from 0.0 to 1.0. These normalized coordinates are required for letting the device’s AR subsystem (ARCore / ARKit) perform a hit test.
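The conversion can be illustrated with a small worked example. This is only a sketch: `normalizeTouch` is a hypothetical helper name, and it assumes that the touch position arrives in CSS pixels while the viewport width and height are given in physical pixels.

```javascript
// Hypothetical helper (not part of Sumerian): converts a touch position
// in CSS pixels into coordinates in the range 0.0 to 1.0.
// Assumption: viewportWidth / viewportHeight are in physical pixels.
function normalizeTouch(pageX, pageY, devicePixelRatio, viewportWidth, viewportHeight) {
  // CSS pixels -> physical pixels -> fraction of the viewport
  const x = (pageX * devicePixelRatio) / viewportWidth;
  const y = (pageY * devicePixelRatio) / viewportHeight;
  return { x, y };
}

// A tap in the center of a 360 x 640 CSS-pixel screen with a pixel ratio
// of 3, which corresponds to a 1080 x 1920 physical-pixel viewport.
const center = normalizeTouch(180, 320, 3, 1080, 1920);
console.log(center); // { x: 0.5, y: 0.5 }
```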

The hit test then performs a raycast from the camera’s eye point. This imaginary ray shoots straight forward, starting at the normalized screen coordinates, until it hits the first object in the real world. We then get the transform (= the position) of where the ray hit the real-world object. Usually, this will be a point on the floor / wall.

In the setup code of the previous article, we already stored two references to Sumerian-internal components as class instance properties.

- Renderer: To get conversion data for the screen, we access the Renderer. It’s responsible for displaying the graphics data to a rendering context. For us, it provides the device pixel ratio, as well as the viewport width and height.

- ArSystem: Sumerian’s glue between its JavaScript APIs and the device-specific native AR APIs. We use it to trigger the hitTest.

As the hit test is performed by the native system, our script again signs up for a call-back to get informed when it’s finished. This hitTestCallback receives the hit position (anchorTransform) as a parameter.

This is the code to add to your script. It combines all the steps outlined above. Put it outside of the start(ctx) function, but inside our AnchorPositioning class.
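A minimal sketch of such a method could look like the following. The class name `AnchorPositioningSketch` and the stand-in objects are hypothetical, and the `hitTest(x, y, callback)` signature is an assumption derived from the description above, so check it against the Sumerian version you are using.

```javascript
class AnchorPositioningSketch {
  constructor(renderer, arSystem) {
    // In the real script, these references are stored during setup.
    this.renderer = renderer;
    this.arSystem = arSystem;
    this.lastHit = undefined;
  }

  // Call-back for the touch event.
  performHitTest(evt) {
    const touch = evt.changedTouches[0];
    const ratio = this.renderer.devicePixelRatio;
    const x = (touch.pageX * ratio) / this.renderer.viewportWidth;
    const y = (touch.pageY * ratio) / this.renderer.viewportHeight;
    // Assumed signature: hitTest(normalizedX, normalizedY, callback)
    this.arSystem.hitTest(x, y, (anchorTransform) => this.hitTestCallback(anchorTransform));
  }

  // Receives the hit position once the native system has finished.
  hitTestCallback(anchorTransform) {
    this.lastHit = anchorTransform;
  }
}

// Stand-ins so that the sketch runs outside of Sumerian.
const fakeRenderer = { devicePixelRatio: 2, viewportWidth: 800, viewportHeight: 1200 };
const fakeArSystem = {
  calls: [],
  hitTest(x, y, callback) {
    this.calls.push([x, y]);
    callback('fake-transform');
  }
};

const sketch = new AnchorPositioningSketch(fakeRenderer, fakeArSystem);
sketch.performHitTest({ changedTouches: [{ pageX: 100, pageY: 300 }] });
console.log(fakeArSystem.calls[0]); // [ 0.25, 0.5 ]
```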

Register Hit Test Callback for Touch Events

Our call-back needs to be registered with the system. This happens in the start(ctx) method, which we created in the previous part of the article. Now, extend it to add an event listener.

Two aspects are tricky: the scope of this, as well as accessing the HTML DOM within the Amazon Sumerian scene.

Scope of this in JavaScript Call-backs

Quick recap: In the setup code, our script received a parameter containing the context (ctx). Through the context, our script accesses various Sumerian components. We saved the ones we need into class instance properties, accessible through this in JavaScript.

Now, because of the tricky way JavaScript binds this, a regular function registered as an event listener doesn’t run with our object as this. Instead, this refers to the DOM element the listener is attached to. Thus, our call-back function can’t access anything we saved as instance properties through this.

There are two possible solutions. The first: using bind(). The second: using arrow functions / lambdas. Keep in mind that we’ll also need to de-register the click listener from the HTML DOM when our script ends. Therefore, a completely anonymous lambda function wouldn’t work for us.

I went for an arrow function, which I stored in a variable. This allows us to de-register the event listener once the script stops. performHitTest(evt) is a function of our AnchorPositioning class, and is therefore also accessed through this.
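The difference can be demonstrated outside of Sumerian. In this sketch, `ScopeDemo` and `FakeElement` are hypothetical stand-ins: the fake element ignores the event type and calls its listeners with this set to itself, which mirrors how the DOM invokes regular functions.

```javascript
class ScopeDemo {
  constructor() {
    this.seen = [];
    // Arrow function stored in a property: `this` is captured lexically,
    // and the same reference can later be passed to removeEventListener.
    this.onTouch = (evt) => this.performHitTest(evt);
  }

  performHitTest(evt) {
    this.seen.push(evt.type);
  }
}

// Minimal stand-in for a DOM element (ignores the event type).
class FakeElement {
  constructor() { this.listeners = new Set(); }
  addEventListener(type, fn) { this.listeners.add(fn); }
  removeEventListener(type, fn) { this.listeners.delete(fn); }
  dispatch(evt) { for (const fn of this.listeners) fn.call(this, evt); }
}

const element = new FakeElement();
const demo = new ScopeDemo();

// Stored arrow function: works, and can be removed again.
element.addEventListener('touchend', demo.onTouch);
element.dispatch({ type: 'touchend' });
element.removeEventListener('touchend', demo.onTouch);
element.dispatch({ type: 'touchend' }); // no listener left, nothing recorded

// Unbound method: `this` is the element, so `this.seen` is undefined.
let unboundFailed = false;
element.addEventListener('touchend', demo.performHitTest);
try {
  element.dispatch({ type: 'touchend' });
} catch (err) {
  unboundFailed = true;
}

console.log(demo.seen);     // [ 'touchend' ]
console.log(unboundFailed); // true
```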

Access the HTML DOM within Amazon Sumerian

Next, we wire up the call-back with the touchend event from the HTML DOM. It gets executed when the user releases the touch. How can we register an event with the HTML DOM?

The simplest way would be the MouseUpAction of Amazon Sumerian. However, this doesn’t provide us with the touch coordinates. The pre-defined action only informs us whenever a touch / click has been released.

Therefore, we again need to dig into the internals of Amazon Sumerian. It works through the internal world object that we already retrieved. This contains the sumerianRunner, which updates the world and calls the renderers. The renderer is the glue to the HTML DOM. Through it, we access the domElement.

Finally, we’re in the actual HTML world that our 3D scene lives in. The rest is standard JavaScript: we add an event listener for the touchend event. If you like, you can also add a listener for mouseup to get a bit farther with testing on a PC. However, real testing of AR is only possible on a device.
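As a rough sketch, the lookup and registration could be written as follows. The property path `world.sumerianRunner.renderer.domElement` follows the description above, but it relies on internal APIs and may differ between Sumerian versions. `registerTouchListener` and the fake context are hypothetical names used only to make the example runnable.

```javascript
function registerTouchListener(ctx, handler) {
  // Path as described in the text: world -> sumerianRunner -> renderer -> domElement.
  const domElement = ctx.world.sumerianRunner.renderer.domElement;
  domElement.addEventListener('touchend', handler);
  // Optional: also react to mouse clicks for limited testing on a PC.
  domElement.addEventListener('mouseup', handler);
  return domElement;
}

// Stand-in context that records which event types were registered.
const addedTypes = [];
const fakeContext = {
  world: {
    sumerianRunner: {
      renderer: {
        domElement: {
          addEventListener(type, fn) { addedTypes.push(type); }
        }
      }
    }
  }
};

registerTouchListener(fakeContext, () => {});
console.log(addedTypes); // [ 'touchend', 'mouseup' ]
```

Note that a mouseup event carries no changedTouches list, so a handler shared between both events would have to read the coordinates from the mouse event itself.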

Removing the Event Listener

When working with Amazon Sumerian, be especially careful about cleaning up. You’ll repeatedly play and stop your 3D scene within Sumerian’s web editor interface. If you leave listeners registered, this could lead to situations where a click in the scene also executes old variants of your script code. These issues are very tricky to find. If you suspect this could be happening, reload the browser window.

The better idea is to prevent this from happening. Our script has a start(ctx) function, which is called at the beginning of its life. Within this function, you can register for an onStop() callback:
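A minimal sketch of the cleanup, assuming that the context offers an `onStop(callback)` registration as described above. `startSketch` and the fake objects are hypothetical and only simulate a play / stop cycle.

```javascript
function startSketch(ctx, domElement, handler) {
  domElement.addEventListener('touchend', handler);
  // Assumption: ctx.onStop(callback) runs the callback when the script stops.
  ctx.onStop(() => {
    domElement.removeEventListener('touchend', handler);
  });
}

// Stand-ins to simulate playing and stopping the scene.
const stopCallbacks = [];
const fakeCtx = { onStop(callback) { stopCallbacks.push(callback); } };
const registered = new Set();
const fakeDom = {
  addEventListener(type, fn) { registered.add(fn); },
  removeEventListener(type, fn) { registered.delete(fn); }
};

const tapHandler = () => {};
startSketch(fakeCtx, fakeDom, tapHandler);
const sizeWhilePlaying = registered.size;

stopCallbacks.forEach((callback) => callback()); // simulate stopping the scene
const sizeAfterStop = registered.size;

console.log(sizeWhilePlaying, sizeAfterStop); // 1 0
```

Removing the listener only works because the same function reference is passed to both addEventListener and removeEventListener. That is the reason for storing the arrow function in a variable.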

Registering the AR Anchor

Now that the hit test is properly wired up, we can proceed to the next overall step. Our script sent the normalized screen position to the AR system of the device. This performed a raycast from the camera to its model of the user’s world.

That process can have two possible outcomes:

- Hit: the hit test actually found a place in the real world that the AR system is aware of. In this case, we get the transform of the position where the ray intersects that place. We use this to register an anchor at that position, which ultimately moves the 3D entity there.

- No hit: the ray didn’t intersect with anything. In our script, we simply don’t react to that scenario and let the user try again. You could of course provide more detailed feedback to the user.

Registering an anchor again works through Amazon Sumerian’s ArSystem. The registerAnchor() function requires a transform as a parameter. Through yet another callback, our script receives the ID of the created anchor, or null if registration was not successful.
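Both outcomes can be handled in a few lines. This is a sketch under assumptions: it treats a falsy transform as "no hit" and assumes the signature `registerAnchor(transform, callback)`. `handleHitTestResult` and the fake AR system are hypothetical names.

```javascript
function handleHitTestResult(arSystem, anchorTransform, onRegistered) {
  // Assumption: a missed hit test is reported as a falsy transform.
  if (!anchorTransform) {
    return false; // no hit: do nothing and let the user try again
  }
  arSystem.registerAnchor(anchorTransform, (anchorId) => {
    // anchorId is null if the registration was not successful.
    if (anchorId) {
      onRegistered(anchorId);
    }
  });
  return true;
}

// Stand-in AR system that always succeeds with a fixed ID.
const fakeAnchorSystem = {
  registerAnchor(transform, callback) { callback('anchor-1'); }
};

const registeredIds = [];
const missResult = handleHitTestResult(fakeAnchorSystem, null, (id) => registeredIds.push(id));
const hitResult = handleHitTestResult(fakeAnchorSystem, [1, 0, 0, 0], (id) => registeredIds.push(id));

console.log(missResult, hitResult, registeredIds); // false true [ 'anchor-1' ]
```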

Linking the Anchor ID to the 3D Model Entity

In case registering the anchor was successful, the native AR system returned an anchor ID. We need to save this with the Sumerian 3D model entity that should be linked to the anchor. This ensures that for every future frame, Sumerian can go through all tracked anchors and update the transform of its linked entity in the scene.

How to link the anchor ID? During the setup phase, we already created and attached an ArAnchorComponent to the entity we want to link. This class has an anchorId property, which stores the anchor ID. After setting this, we’re technically done!
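In code, the link is a single assignment. The sketch below assumes that the component can be looked up by name through `getComponent('ArAnchorComponent')`. If you kept a reference to the component during setup, you can use that instead. `linkAnchor` and the fake entity are hypothetical.

```javascript
function linkAnchor(entity, anchorId) {
  if (!anchorId) {
    return false; // registration failed, nothing to link
  }
  // Assumption: the component attached during setup is retrievable by name.
  entity.getComponent('ArAnchorComponent').anchorId = anchorId;
  return true;
}

// Stand-in entity with a single component.
const anchorComponent = { anchorId: null };
const fakeEntity = {
  getComponent(name) {
    return name === 'ArAnchorComponent' ? anchorComponent : undefined;
  }
};

const linked = linkAnchor(fakeEntity, 'anchor-1');
console.log(linked, anchorComponent.anchorId); // true anchor-1
```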

The remaining steps are for our internal scene logic. As described in part 2, I decided to implement two different signaling mechanisms.

- First, a global status variable called placeMode that is set to true while placement mode is active.

- Second, events that signal whether placement mode starts or finishes.

That way, other components can decide if they simply want to check a global variable to allow / disallow an action as it occurs; or if they want to perform more complex actions on their own when the placement mode changes by subscribing to the events.

After the anchor is registered, our script therefore sets the global placeMode variable to false and then emits the worldPlaced event. Of course, depending on the logic of the rest of the scene, the user is free to enter the mode again any time.
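The order matters: listeners of the event should already see the updated variable. A minimal sketch, where `finishPlacement`, the `sceneState` object and the `emit` function are hypothetical stand-ins for however your scene stores global values and emits events:

```javascript
function finishPlacement(state, emit) {
  state.placeMode = false; // first update the global status variable
  emit('worldPlaced');     // then notify subscribers
}

// Stand-ins: a plain object as global state, and an emitter that records
// the value of placeMode at the moment the event is emitted.
const sceneState = { placeMode: true };
const emitted = [];
finishPlacement(sceneState, (name) => {
  emitted.push({ name, placeModeAtEmit: sceneState.placeMode });
});

console.log(emitted); // [ { name: 'worldPlaced', placeModeAtEmit: false } ]
```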

Kicking off the script: StartPlaceWorld Event

But how to enter the AR placement mode? That’s the only component still missing. As part of the start(ctx) function, our class needs to subscribe to the StartPlaceWorld event.

The StartPlaceWorld event has to be emitted by another part of your scene – whenever you want the user to re-position an object. As described in part 1, in the Digital Healthcare Explained app, it is triggered when the user taps the host. But you could use any other user interface element to emit that event.
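The interplay between the event and the tap handling can be sketched with a tiny event bus. `FakeEvents` and `PlacementGate` are hypothetical classes: Sumerian provides its own event mechanism, and this stand-in only mimics the subscribe / emit pattern.

```javascript
// Hypothetical minimal event bus standing in for Sumerian's event system.
class FakeEvents {
  constructor() { this.handlers = new Map(); }
  listen(name, fn) {
    if (!this.handlers.has(name)) this.handlers.set(name, []);
    this.handlers.get(name).push(fn);
  }
  emit(name) {
    for (const fn of this.handlers.get(name) || []) fn();
  }
}

class PlacementGate {
  constructor(events) {
    this.placeMode = false;
    this.hitTests = 0;
    // In the article's script, this subscription lives in start(ctx).
    events.listen('StartPlaceWorld', () => { this.placeMode = true; });
  }

  onTap() {
    if (!this.placeMode) return; // ignore taps outside of placement mode
    this.hitTests += 1;          // the real script would run the hit test here
  }
}

const events = new FakeEvents();
const gate = new PlacementGate(events);
gate.onTap();                   // ignored: placement mode not active yet
events.emit('StartPlaceWorld'); // e.g., emitted when the user taps the host
gate.onTap();                   // counted
console.log(gate.hitTests);     // 1
```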

Conclusion

The process of attaching an AR anchor to a 3D entity isn’t easy. The official tutorial is currently still based on the legacy scripting format of Amazon Sumerian. While the new scripting API is much more streamlined by embracing ES6, updating that script was a lot more complex than I initially thought.

While the previous scripting language made everything public, the new system distinguishes between public and internal APIs. Even though it is possible to access internal APIs, you need to understand how everything fits together. Most scenarios should be doable using the standard Sumerian APIs. This case however requires a massive use of internal APIs, plus several calls to the device native AR system.

The good news is that this article series explained everything that goes on behind the scenes, starting with the scene structure, up to the glue between the native AR APIs and your JavaScript code.

In case you just need a solution that works without learning about all the details, simply copy the finished script into your scene. It works out of the box. You just need to emit the StartPlaceWorld event from another component in your scene. The rest is taken care of.

For reference, this is the complete AnchorPositioning.js script: