Every new product or service claims to use deep learning or neural networks. But how do they really work? What can machine learning do? How complicated is it to get started?

In the 4-part video series “Deep Learning Hands-On with TensorFlow 2 & Python”, you’ll learn what many of the buzzwords are about and how they relate to the problems you want to solve.

The short videos start with the background of neural networks, which are the foundation of deep learning. Then, two practical examples show how you can use neural networks to perform classification with TensorFlow:

- Breast cancer classification: based on numerical / categorical data

- Handwritten digit classification: the classic MNIST dataset, based on small images

In the last part, we’ll look at one of the most important specialized variants of neural networks: convolutional neural networks (CNNs), which are especially well-suited for image classification.

Watching all four videos gives you a thorough understanding of how deep learning works and the guidance to get started!

Part 1: Machine Learning Explained: Neural Networks & Deep Learning

- How do Neural Networks make decisions?

- How does the iterative learning process work?

Starting with just a little bit of mathematics that should still be familiar from school, this video introduces the basic building blocks of Neural Networks step-by-step. Many examples make the effects and results immediately visible.

When you combine all these little steps, you will see that the basic concept behind Neural Networks is not so complicated.

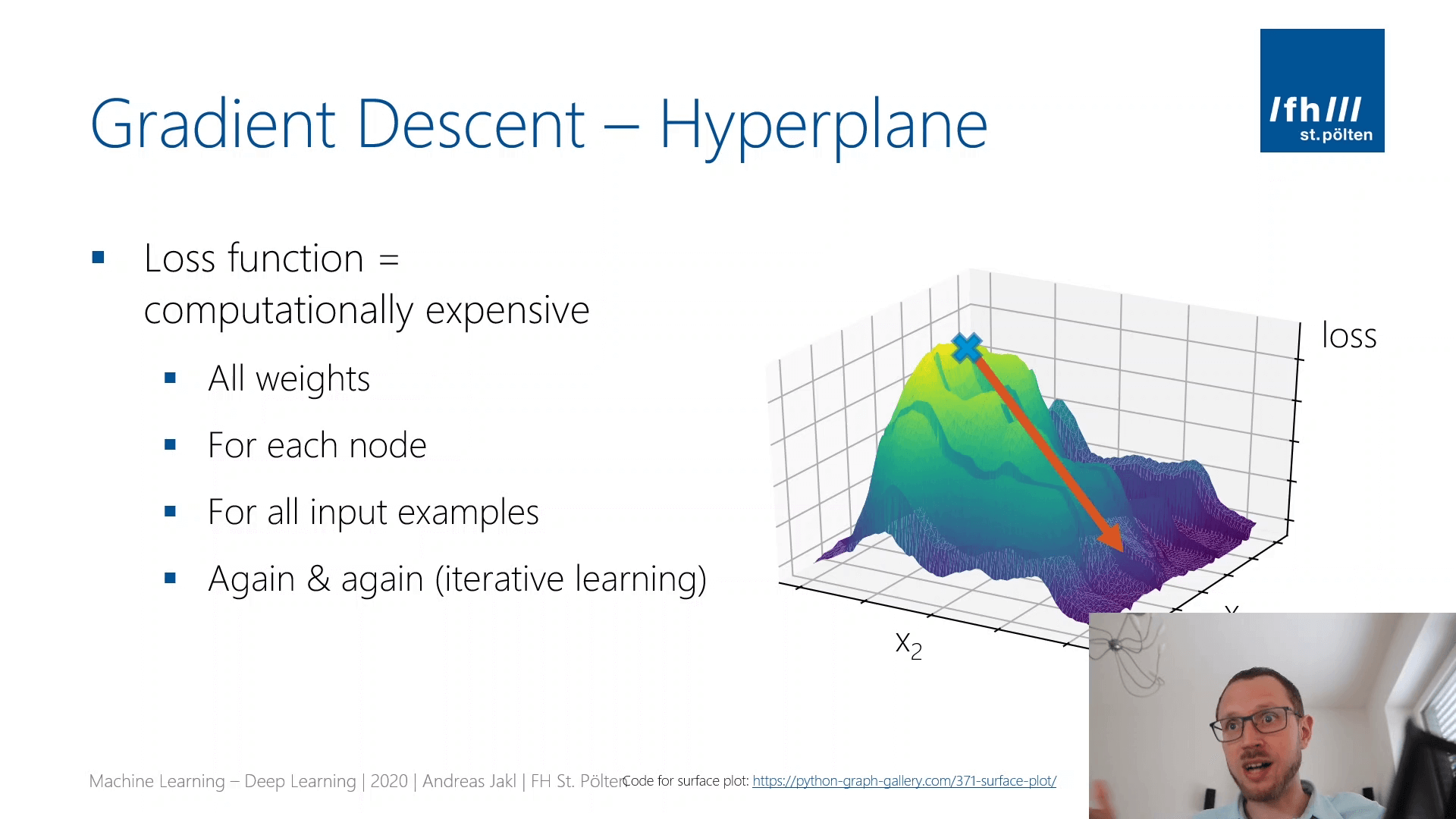

Based on this background knowledge, you will understand the most important concepts that are required to build your own neural networks for deep learning problems, as explained in the following videos of this series. These include perceptrons & neurons, activation functions, weights and bias, error & loss functions, gradient descent, backpropagation, and overfitting.
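To make a few of these concepts more tangible, here is a minimal sketch of a single neuron in plain Python. It is not taken from the video: the toy data (a logical AND), the learning rate, and the number of epochs are assumptions chosen for illustration. It shows weights and bias, a sigmoid activation, and gradient descent on a cross-entropy loss. With only one neuron there are no hidden layers, so full backpropagation is not needed yet.

```python
import math


def sigmoid(z):
    """Activation function: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Weighted sum of the inputs plus bias, passed through the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


def train(samples, epochs=5000, learning_rate=0.5):
    """Fit one neuron with per-sample (stochastic) gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            output = predict(weights, bias, inputs)
            # For sigmoid + binary cross-entropy, the gradient of the loss
            # with respect to the weighted sum is simply (output - target).
            error = output - target
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias


# Hypothetical toy data: the logical AND of two binary inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "target:", target,
          "prediction:", round(predict(weights, bias, inputs), 3))
```

Each pass nudges the weights and the bias a little in the direction that reduces the error, which is the iterative learning process in its simplest form.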

Part 2: Machine Learning: Deep Learning Hands-On with TensorFlow

Hands-on coding with explanations: how to classify the Breast Cancer Wisconsin Dataset from Scikit-Learn with TensorFlow.

The tutorial is based on a Jupyter Notebook in JupyterLab with Python 3. We will build a neural network with one hidden layer (8 nodes, ReLU activation) and one output node (Sigmoid activation). As you will see, this already gives satisfactory results. In addition, our neural network is still small enough to train on a normal laptop.
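As a rough idea of what such a network can look like in code, here is a minimal sketch using the Keras API of TensorFlow 2. Only the layer sizes and activations come from the description above. The train/test split, the feature scaling with `StandardScaler`, the Adam optimizer, the number of epochs, and the batch size are assumptions and may differ from what the video uses.

```python
import tensorflow as tf
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Load the 30 numerical features and the binary labels from Scikit-Learn.
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42)

# Assumption: standardize the features so that training behaves well.
scaler = StandardScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)

# One hidden layer (8 nodes, ReLU) and one output node (sigmoid).
model = tf.keras.Sequential([
    tf.keras.Input(shape=(X_train.shape[1],)),
    tf.keras.layers.Dense(8, activation="relu"),
    tf.keras.layers.Dense(1, activation="sigmoid"),
])

# Assumption: Adam optimizer with binary cross-entropy loss.
model.compile(optimizer="adam",
              loss="binary_crossentropy",
              metrics=["accuracy"])

# Assumption: 20 epochs with a batch size of 32.
model.fit(X_train, y_train, epochs=20, batch_size=32, verbose=0)

loss, accuracy = model.evaluate(X_test, y_test, verbose=0)
print(f"Test accuracy: {accuracy:.3f}")
```

The sigmoid output is a value between 0 and 1 that can be read as the probability of one of the two classes.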

As a bridge from the previous theory module, the video first explains network architectures using the TensorFlow Playground.

Part 3: Handwritten Image Classification with the MNIST Dataset

Hands-on coding with explanations: how to classify the MNIST dataset with TensorFlow 2. Based on JupyterLab (Jupyter Notebook) with Python 3.

We build a neural network with a flattening layer, two hidden layers (300 and 100 nodes, ReLU activation), and one output layer with ten nodes and softmax activation.

The network predicts a probability for each of the handwritten digits 0 to 9. At the end, the summary compiles the main concepts of a neural network for deep learning.
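The architecture described above could be sketched roughly as follows with the Keras API of TensorFlow 2. The layer sizes and activations follow the description. The optimizer and loss are assumptions, and a random array stands in for a real 28 x 28 MNIST image so that the sketch runs without downloading the dataset.

```python
import numpy as np
import tensorflow as tf

# Flattening layer, two hidden layers (300 / 100 nodes, ReLU),
# and an output layer with ten nodes and softmax activation.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(28, 28)),
    tf.keras.layers.Flatten(),
    tf.keras.layers.Dense(300, activation="relu"),
    tf.keras.layers.Dense(100, activation="relu"),
    tf.keras.layers.Dense(10, activation="softmax"),
])

# Assumption: Adam optimizer and a loss for integer class labels (0..9).
model.compile(optimizer="adam",
              loss="sparse_categorical_crossentropy",
              metrics=["accuracy"])

# Random stand-in for one grayscale image with pixel values in [0, 1).
fake_image = np.random.rand(1, 28, 28).astype("float32")

# The softmax output contains one probability per digit.
probabilities = model.predict(fake_image, verbose=0)[0]
print("Probabilities:", probabilities.round(3))
print("Most likely digit:", int(probabilities.argmax()))
```

Since the model is untrained here, the predicted digit is meaningless. For real training, the images can be loaded with `tf.keras.datasets.mnist.load_data()` and scaled to the range 0 to 1 before calling `model.fit`.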

Part 4: Convolutional Neural Networks Explained

Overview of how Convolutional Neural Networks (CNN) perform classification.

CNNs work exceptionally well with images. Because many of today’s scenarios are based on images, CNNs have become one of the most important specializations of Neural Networks.

Instead of extracting features from images in a pre-processing step, CNNs usually work on the raw image data. By training kernel matrices, they try to find structures and patterns in the image that are ultimately useful for performing the actual classification.
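To illustrate what a kernel matrix does, here is a small sketch in plain Python that slides a 3 x 3 kernel over a tiny image. The image and the kernel values are hand-picked assumptions for illustration. In a real CNN, the kernel values are not chosen by hand but learned during training.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the
    element-wise products. As in most deep learning libraries, the kernel
    is not flipped, so strictly speaking this is a cross-correlation."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4 x 6 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(image, kernel)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The resulting feature map is zero in the uniform areas and has high values exactly where the window covers the edge between the dark and the bright half. This is the kind of pattern a trained kernel can pick up.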

CNNs have many applications, ranging from medical scenarios (MR / CT / ultrasound analysis, detecting skin cancer from images, etc.) to other areas such as describing objects in an image or view for people with impaired vision, and self-driving cars.

For many machine learning scenarios, cloud services provide ready-made solutions. I’ve already written a short tutorial on the Microsoft Azure Cognitive Services. A few lines of JavaScript are enough to retrieve a full photo description!